Addressing private IPv4 shortage: 5 Strategies for Amazon EKS

Anyone who works with Amazon EKS has probably run into IPv4 scarcity. We will explore 5 strategies to mitigate or eliminate this problem.

Context

In Kubernetes, you need a Container Network Interface (CNI) plugin so that pods can communicate with each other using their own IP addresses.

When using Amazon EKS, by default you get the VPC Container Network Interface (CNI). This plugin is installed as a daemonset in the cluster.

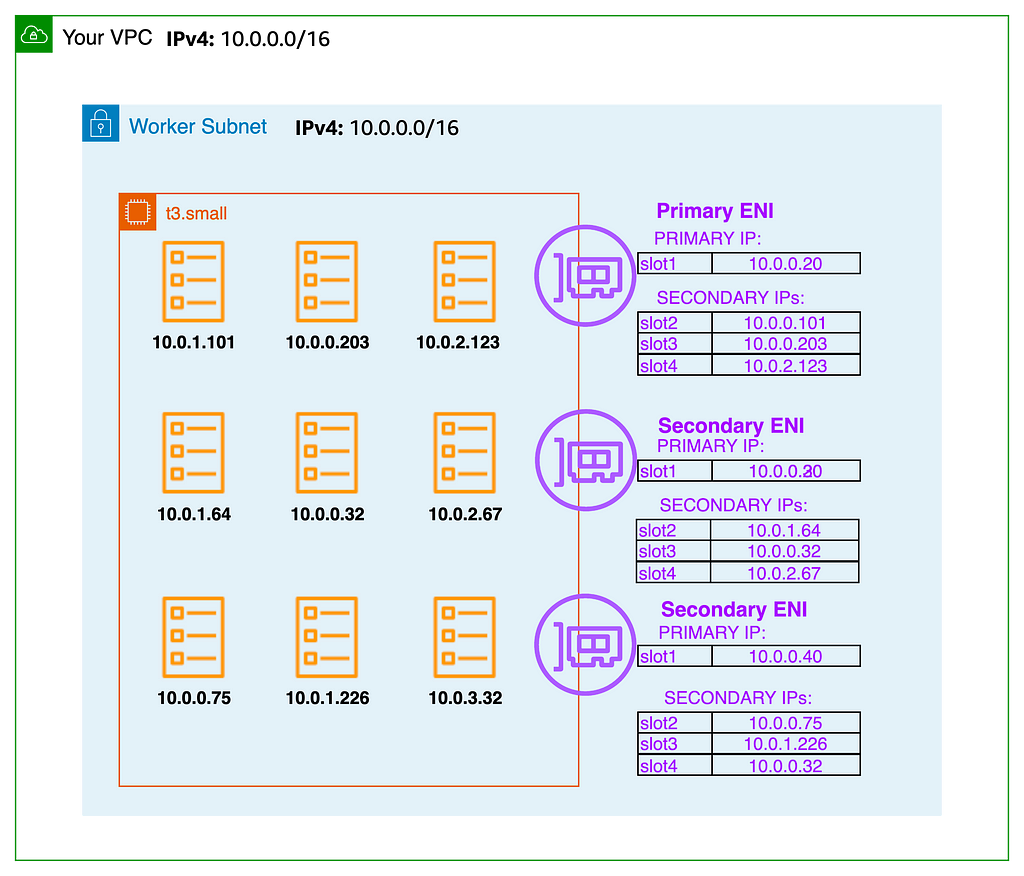

EKS Networking with VPC CNI uses AWS VPC resources such as Elastic Network Interfaces (ENI) and IP addresses that exist in the VPC and subnets. The CNI plugin allows Kubernetes Pods to have the same IP address as they do on the VPC network.

It provisions one secondary IP address (an ENI has 1 primary and multiple secondary ones) for each pod in the cluster, which can take up a lot of IP space in your subnets.
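To get a feel for the numbers, here is a rough back-of-the-envelope sketch. The subnet CIDR and the node and pod counts are made-up values. The only AWS-specific fact it relies on is that AWS reserves 5 addresses in every subnet. It is a lower bound: it ignores warm pools and the primary addresses of additional ENIs, so real consumption is higher.

```python
import ipaddress

AWS_RESERVED_PER_SUBNET = 5  # first four addresses and the last one


def usable_ips(subnet_cidr: str) -> int:
    """Addresses of a VPC subnet that can actually be assigned."""
    net = ipaddress.ip_network(subnet_cidr)
    return net.num_addresses - AWS_RESERVED_PER_SUBNET


def ips_needed(nodes: int, pods_per_node: int) -> int:
    """Lower bound: one primary IP per node plus one secondary IP per pod."""
    return nodes * (1 + pods_per_node)


subnet = "10.0.0.0/24"  # hypothetical subnet
print(usable_ips(subnet))                      # 251
print(ips_needed(nodes=10, pods_per_node=30))  # 310
```

In this example, 10 nodes running 30 pods each already need more addresses than a /24 subnet can offer.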

Why not use another CNI?

You can disable native AWS IP provisioning by using another CNI. IPv4 management then typically works in the following way:

- IP addresses of pods are scoped to the internal network

- Pods are not directly reachable from outside the cluster

- Every pod uses the primary node ENI

There are many benefits you lose by dropping the VPC CNI:

- Security groups for pods

- Pod identification in VPC flow logs

- Pod readiness gate with load balancer controller

- Simpler networking: no overlay network, you use the network provided by Amazon VPC

In this article, we will focus on solutions that keep the VPC CNI.

An easy change: VPC CNI tweaking

There are several environment variables used by the VPC CNI to configure its behavior. We will address WARM_ENI_TARGET and WARM_IP_TARGET.

WARM_ENI_TARGET: Specifies the number of free elastic network interfaces (and all of their available IP addresses) to keep attached to the node. With WARM_ENI_TARGET = 1, a full ENI of unused IP addresses is provisioned.

The maximum number of IPs per ENI is tied to the EC2 instance type. For example, there are 15 IPs on an ENI for an m6a.xlarge. You can find the reference in the AWS Documentation.
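These per-instance limits translate directly into a maximum pod count per node. The sketch below uses the formula AWS publishes for secondary IP mode. The 15 IPs per ENI come from the example above; the ENI count of 4 for an m6a.xlarge is an assumption you should check against the AWS reference table.

```python
def max_pods(eni_count: int, ips_per_eni: int) -> int:
    """Maximum pods per node in secondary IP mode.

    The primary IP of each ENI is not handed out to pods, and the +2
    accounts for pods that use the host network (aws-node, kube-proxy).
    """
    return eni_count * (ips_per_eni - 1) + 2


# m6a.xlarge: 15 IPs per ENI (from the text), 4 ENIs (assumed)
print(max_pods(eni_count=4, ips_per_eni=15))  # 58
```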

Unless you have specific requirements, I would advise you to set WARM_ENI_TARGET to 0.

WARM_IP_TARGET: Specifies the number of free IP addresses that should be kept at all times on all interfaces.

When a pod is scheduled on a node and no IP address is available on any of its ENIs, the pod may take longer to start because the VPC CNI plugin first has to provision resources (by calling AssignPrivateIpAddresses).

Due to this risk, it is a good practice to set WARM_IP_TARGET to at least 1.
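To illustrate the difference between the two settings, here is a deliberately simplified model of how many VPC addresses a single node holds. It is a sketch and not the actual allocation logic of the plugin: it ignores other settings such as MINIMUM_IP_TARGET, timing and cooldown effects, and prefix delegation. The function names are hypothetical, and the instance limits (15 IPs per ENI, 4 ENIs) are example values.

```python
import math


def ips_held_with_warm_eni(pods, ips_per_eni, max_enis, warm_eni_target=1):
    """Approximate IPs held by one node when whole ENIs are kept warm."""
    secondary_per_eni = ips_per_eni - 1  # the ENI primary IP is not given to pods
    enis_in_use = math.ceil(pods / secondary_per_eni)
    enis = min(max_enis, max(1, enis_in_use + warm_eni_target))
    return enis * ips_per_eni  # every attached ENI is fully populated


def ips_held_with_warm_ip(pods, ips_per_eni, max_enis, warm_ip_target=1):
    """Approximate IPs held by one node when only a few spare IPs are kept warm."""
    secondary_per_eni = ips_per_eni - 1
    secondary = min(pods + warm_ip_target, max_enis * secondary_per_eni)
    enis = max(1, math.ceil(secondary / secondary_per_eni))
    return enis + secondary  # one primary IP per ENI plus the assigned secondary IPs


for pods in (1, 10, 20):
    print(pods,
          ips_held_with_warm_eni(pods, ips_per_eni=15, max_enis=4),
          ips_held_with_warm_ip(pods, ips_per_eni=15, max_enis=4))
```

Under these assumptions, a node running a single pod holds 30 addresses with WARM_ENI_TARGET = 1, but only 3 with WARM_IP_TARGET = 1.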

When using prefix delegation: use subnet CIDR reservation

With the VPC CNI, you can configure IPv4 allocation to use either:

- Individual secondary IPv4 addresses (the default mode)

- Or whole /28 prefixes: Prefix delegation (used when you need larger pod density per node)

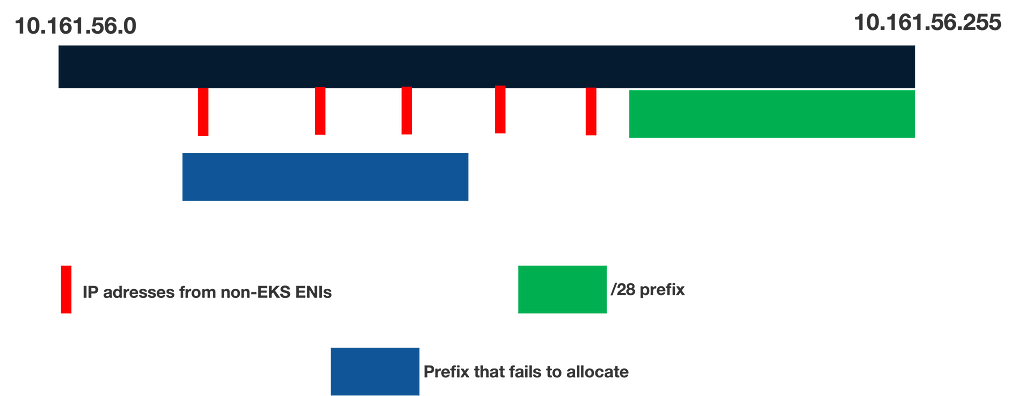

For a prefix to be allocated, a whole /28 range must be free. If even one IPv4 address in that range is already in use, the prefix cannot be allocated. If there is no contiguous free /28 range in your subnet, the allocation fails and your pod will not start, even if hundreds of individual IPs are still available.

The diagram below outlines this situation:

One way to overcome this problem is VPC subnet CIDR reservation. This feature lets you set aside CIDR ranges within a subnet that can only be used for whole /28 prefixes and not for individual IP addresses.

You can learn more about this feature in the AWS documentation.
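The fragmentation problem is easy to reproduce on paper. The following sketch lists the /28 blocks of a subnet that are still entirely free, given a set of addresses already in use. The subnet and the used addresses are made-up values, and `free_prefixes` is a hypothetical helper, not an AWS API. It accounts for the 5 addresses AWS reserves in every subnet.

```python
import ipaddress


def free_prefixes(subnet_cidr, used_ips, prefix_len=28):
    """Return the /28 blocks of the subnet that contain no used address."""
    subnet = ipaddress.ip_network(subnet_cidr)
    # AWS reserves the first four addresses and the last one of each subnet
    reserved = {subnet[0], subnet[1], subnet[2], subnet[3], subnet[-1]}
    taken = reserved | {ipaddress.ip_address(ip) for ip in used_ips}
    return [
        block
        for block in subnet.subnets(new_prefix=prefix_len)
        if not any(ip in block for ip in taken)
    ]


# A /26 holds four /28 blocks. Two scattered addresses are enough to
# leave no block free, although 57 individual addresses remain unused.
print(free_prefixes("10.0.0.0/26", ["10.0.0.20", "10.0.0.40"]))  # []
```

The first and last blocks are lost to the reserved addresses, and each of the two middle blocks contains one used address, so no prefix can be allocated.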

If you still have IP scarcity issues after these first two improvements, the following solutions have more impact but require more work.

VPC CNI Custom Networking using Internal SNAT

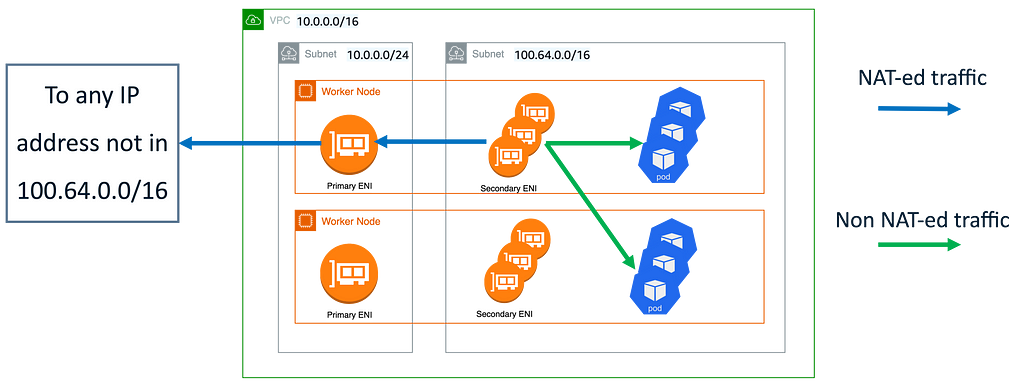

The following setup involves 2 features of the VPC CNI:

- Custom Networking: lets pods use a different ENI configuration (subnet and security groups) from their node. In practice, we will place all pod ENIs in a different subnet from the one used by the node

- Internal SNAT: translates the source address of pod traffic bound for destinations outside the VPC CIDR blocks to the primary IP of the node.

The diagram below shows an overview of the solution:

- We create dedicated subnets for the pods. These should not be routable from outside the VPC (we can call these subnets internal)

- The new subnets can have any CIDR block, but a good choice is the CG-NAT space (100.64.0.0/10) because companies don’t usually use this range for private networks.

- Use custom networking to place pod ENIs in the new subnets (more information in the EKS best practices guide)

- Internal SNAT is enabled by default when using custom networking (see AWS documentation)
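As a minimal sketch of what this looks like in practice, the snippet below checks that a pod subnet sits in the CG-NAT range and builds one ENIConfig custom resource per availability zone, which is the object the VPC CNI reads for custom networking. The subnet ID, security group ID, zone name and CIDR are placeholders, and the helper names are hypothetical. The two environment variables are the ones set on the aws-node daemonset to turn the feature on.

```python
import ipaddress
import json

CGNAT = ipaddress.ip_network("100.64.0.0/10")  # shared address space (RFC 6598)


def in_cgnat(cidr: str) -> bool:
    """True if the CIDR block lies entirely inside the CG-NAT range."""
    return ipaddress.ip_network(cidr).subnet_of(CGNAT)


def eni_config(az: str, subnet_id: str, security_group_ids: list) -> dict:
    """Build an ENIConfig manifest named after its availability zone."""
    return {
        "apiVersion": "crd.k8s.amazonaws.com/v1alpha1",
        "kind": "ENIConfig",
        "metadata": {"name": az},
        "spec": {"subnet": subnet_id, "securityGroups": security_group_ids},
    }


# Environment variables to set on the aws-node daemonset
CNI_ENV = {
    "AWS_VPC_K8S_CNI_CUSTOM_NETWORK_CFG": "true",
    "ENI_CONFIG_LABEL_DEF": "topology.kubernetes.io/zone",
}

# Placeholder values, replace with your own
pod_subnet_cidr = "100.64.0.0/16"
assert in_cgnat(pod_subnet_cidr)
manifest = eni_config("eu-west-1a", "subnet-0123456789abcdef0", ["sg-0123456789abcdef0"])
print(json.dumps(manifest, indent=2))  # kubectl apply accepts JSON manifests
```

Naming each ENIConfig after its zone lets the CNI pick the right one through the node's zone label, so no per-node annotation is needed.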

With this configuration:

- Network flow from pod to pod will not be NAT-ed. You will see pod IPs in the VPC flow logs

- Network flow from a load balancer in the same VPC to any pod is possible without any NAT

- Network flow from a pod to an IP address outside the VPC CIDR blocks is NAT-ed through the primary IP of the node's primary ENI.

- Ingress traffic from outside the VPC to the pods should pass through a load balancer (a.k.a layer 4 proxy) located in non-internal subnets

- You can reuse the same pod subnet CIDRs in as many VPCs as you want, because these subnets are never routed outside their own VPC.
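The NAT behaviour can be summarised as one rule. The sketch below models it under the assumption that the plugin runs in its default mode, where, according to the AWS documentation, source NAT applies to destinations outside the CIDR blocks associated with the VPC. The CIDR blocks and addresses are made-up values, and `is_snatted` is a hypothetical helper.

```python
import ipaddress


def is_snatted(destination: str, vpc_cidrs: list) -> bool:
    """True if pod traffic to this destination leaves with the node's primary IP."""
    dest = ipaddress.ip_address(destination)
    return not any(dest in ipaddress.ip_network(cidr) for cidr in vpc_cidrs)


# Hypothetical VPC: a routable primary block and a CG-NAT block for pods
vpc_cidrs = ["10.0.0.0/16", "100.64.0.0/16"]

print(is_snatted("100.64.3.7", vpc_cidrs))   # False: another pod
print(is_snatted("10.0.5.9", vpc_cidrs))     # False: resource in the same VPC
print(is_snatted("172.16.0.10", vpc_cidrs))  # True: peered or on-premises network
```

This is why the pod subnets never need to be routable from outside: every packet that leaves the VPC carries the routable address of the node.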

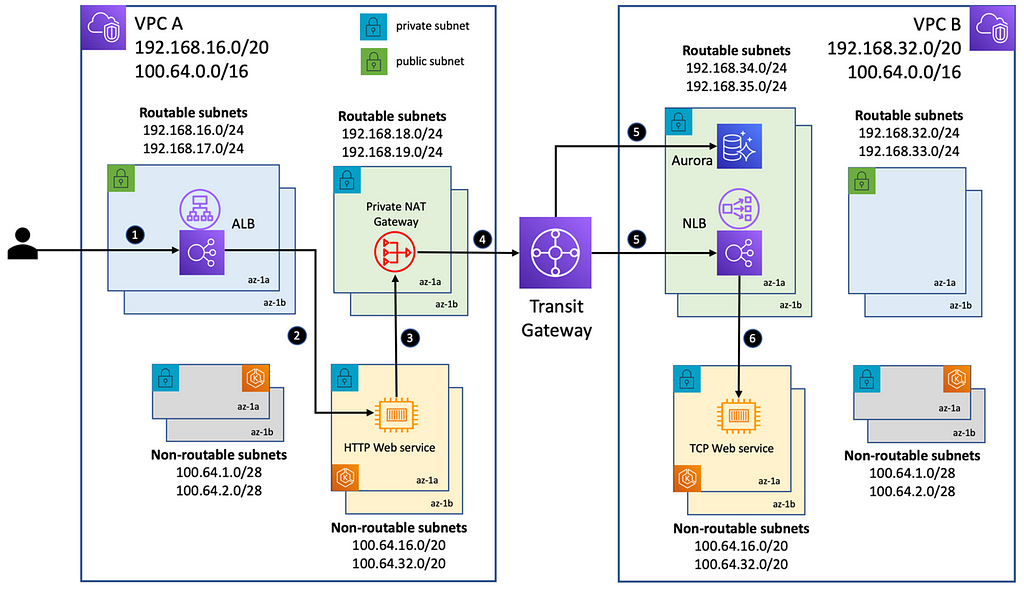

NATted data-plane with private NAT gateway

A more recent solution to address the issue is using private NAT gateways, described in this blog post.

Since mid-2021, you can create private NAT gateways, allowing you to NAT the entire EKS data plane:

- You create non-routable subnets (preferably with CIDR ranges from CG-NAT space), but this time for hosting all the data plane IPs (pods and nodes)

- This removes the need for Kubernetes-specific configuration to separate node and pod ENIs

There is a caveat to keep in mind: you get charged per private NAT gateway and for data processing costs for VPC-to-VPC communication. This has to be put in perspective with your whole bill for the EKS infrastructure.

IPv6

The most future-proof solution to IPv4 exhaustion, but also the most disruptive, is setting up IPv6.

Here are some advantages of using IPv6:

- The network infrastructure is simpler: network flow does not need to go through a NAT gateway. Your pods are assigned a public IPv6 address, which simplifies troubleshooting.

- Cost reduction: traffic from IPv6 pods to public IPv6 destinations does not go through a NAT gateway, so you avoid NAT gateway data processing charges.

- You can still keep compatibility with existing IPv4-only infrastructure using NAT64/DNS64
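To make the NAT64/DNS64 mechanism more concrete: DNS64 answers a query for an IPv4-only host with a synthesized IPv6 address that embeds the IPv4 address in a /96 prefix, and NAT64 translates traffic sent to that address back to IPv4. The sketch below shows the synthesis with the well-known prefix 64:ff9b::/96 defined in RFC 6052. The IPv4 address is a documentation example.

```python
import ipaddress

NAT64_PREFIX = ipaddress.ip_network("64:ff9b::/96")  # well-known prefix (RFC 6052)


def synthesize_nat64(ipv4: str) -> ipaddress.IPv6Address:
    """Embed an IPv4 address in the last 32 bits of the NAT64 prefix."""
    v4 = ipaddress.IPv4Address(ipv4)
    return ipaddress.IPv6Address(int(NAT64_PREFIX.network_address) | int(v4))


print(synthesize_nat64("192.0.2.33"))  # 64:ff9b::c000:221
```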

There are currently 2 ways to run IPv6 on EKS.

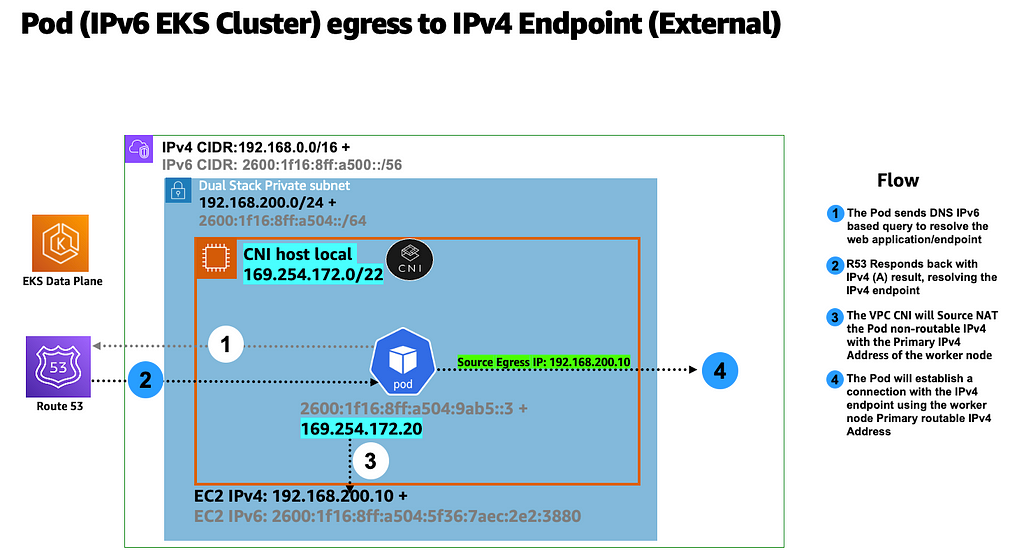

The first one uses dual-stack subnets for the data plane (discussed on the EKS best practices website):

- Every pod has only an IPv6 address

- The node has an IPv4 and an IPv6 address

- For egress traffic from a pod to an IPv4 address, the behavior is similar to custom networking: the traffic goes through the primary node IP address.

The second one uses IPv6-only subnets:

- This currently requires NAT64/DNS64 so that nodes and pods can communicate with the API server, because the EKS API server does not yet support IPv6. This is one reason why EKS is not officially IPv6-only

- This solution is preferred as it does not involve custom logic with the host's local CNI, and NAT64/DNS64 is widely supported by AWS.

Conclusion

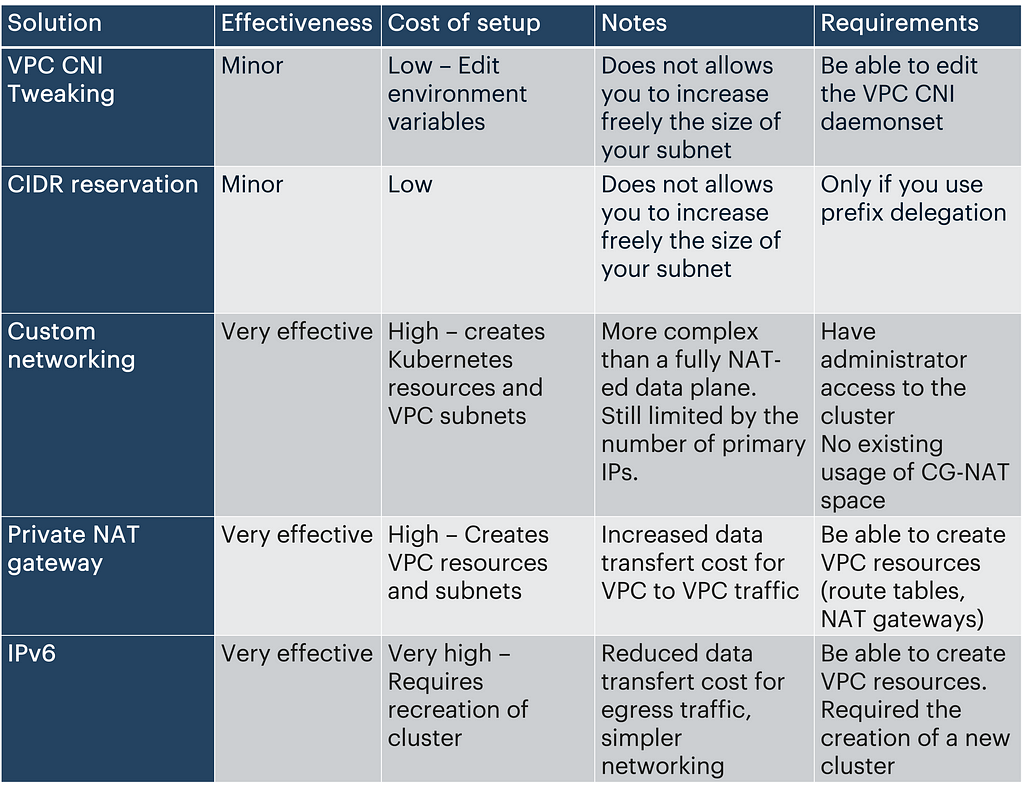

Here is a matrix summarising the main characteristics of each solution:

Addressing private IPv4 shortage: 5 Strategies for Amazon EKS was originally published in ekino-france on Medium.