The “AI revolution” is everywhere. Artificial intelligence is increasingly finding its way into our tools, our conversations, and our lives. This technology brings a host of new uses that would have been unimaginable just a decade ago. Today, you can generate poetry in the style of Leopardi or craft an art-house film with nothing more than the tap of your fingers on a keyboard. You can even discover a loyal lover, available and brimming with knowledge, hidden behind a simple stream of computerized electricity.

Nevertheless, while the benefits of this technology are undeniable, a darker side remains. This flip side, often mistakenly dismissed as techno-pessimism, raises a host of important questions we have yet to answer. And it is these answers, sometimes technical, sometimes moral, that will determine whether AI becomes truly democratized or not.

The purpose of this article is not to be needlessly pessimistic, but to build an inventory of the problems that we, as a society, must address in order to effectively carry forward this AI revolution. To make this inventory more accessible, each problem is framed as an unanswered question, supported by a reasoned explanation. Additionally, suggested media resources are provided for each problem, if you wish to explore any of them further.

It is important to note that this article does not offer solutions. The breadth of the topics covered requires expertise in each of these fields to legitimately propose answers. Lacking such polymath expertise, this article takes a documentary approach. Blind spots may still persist in these reflections, so this inventory is not exhaustive.

This article also focuses mainly on the French context. Some problems are discussed in more detail for France specifically, and some sources are in French.

Ecological and energy problems

Problem 1

How can we prevent AI from actively contributing to a future water crisis?

In 2019, Google built additional data centers in Arizona, which led to a Time article strongly emphasizing that these facilities consumed far too much water in areas already affected by drought. Arizona, already one of the hardest-hit states, found itself embroiled in political battles to maintain water supply for its residents.

This water crisis has not improved over time, as recent droughts have shown. Meanwhile, AI is driving the need for more data centers, and therefore, ever more water. When correlated with IPCC forecasts predicting increasingly frequent droughts, the future seems headed toward a water war to decide whether it is the residents or the machines that should be kept hydrated.

Problem 2

How can we reconcile the energy consumption of data centers with the ecological crisis?

AI requires a large number of graphics processors to be trained and to operate. As a result, energy demand has surged in an unusual way, so much so that coal plants have been reopened to prevent Americans from facing power outages. Multiple investment plans have been launched to supply more energy and keep data centers running. However, these plans take years to complete, leaving a significant increase in fossil fuel consumption in the meantime.

Closer to home, after the French government urged us to practice energy sobriety to avoid winter blackouts (a measure that has now become an ecological lever to protect the environment), Alsace is currently negotiating to host a Microsoft data center whose energy consumption is estimated to equal that of 350,000 households. Meanwhile, Hauts-de-France aims to become a recognized AI hub, and Île-de-France is set to welcome its share of data centers — all under the guise of tax reductions on electricity. Adding to the concern, climate-related investments dropped by 5% between 2023 and 2024. In short, we are witnessing efforts to shield AI companies so that France can become a major player in this field, at the expense of stronger ecological commitments.

More information on these problems:

- [EN] We did the math on AI’s energy footprint. Here’s the story you haven’t heard. — J. O’Donnell, C. Crownhart — MIT Technology Review — May 20, 2025

- [FR] Les impacts de l’IA sur l’environnement — F. Garcia, S. Schbath — Annales des Mines — March 2025

- [EN] The Uneven Distribution of AI’s Environmental Impacts — S. Ren, A. Wierman — Harvard Business Review — July 15, 2024

Financial problem

Problem 3

How can we build a stable AI economy that is independent of financial investments?

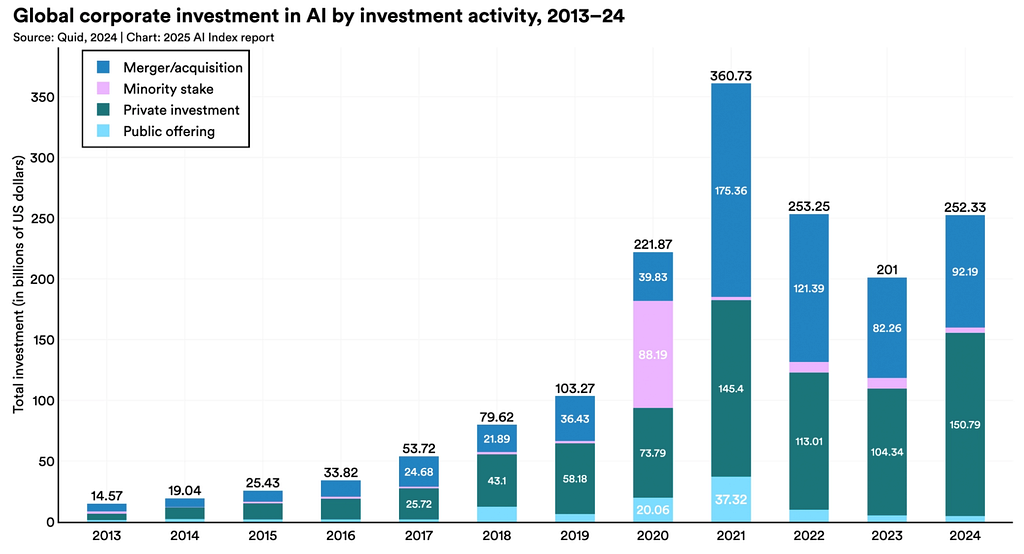

According to chart (G1), AI is characterized by high levels of funding, reaching $250 billion in 2024. The first noteworthy point is that this figure reflects strong investor interest in the field, but also the growing amount of money required to develop increasingly powerful models. Training costs are expected to exceed €1 billion by 2027.

The second insight from this chart is the trend in investments over recent years. While 2021 was an exceptional year in terms of funding, AI investments have remained stable at around $250 billion since then. In contrast, global investments in Cleantech have declined since 2021, dropping from €51.7 billion to €31.8 billion in 2024. More broadly, the AI sector is absorbing a significant share of investments at the expense of other industries. In the first quarter of 2025 in the United States, AI accounted for 71% of total investments. Meanwhile, in France, the President announced a €109 billion investment package in AI, financed primarily by the United Arab Emirates.

At the same time, several Bloomberg articles have highlighted the circular financing that largely underpins the AI sector, once again drawing attention to the existence of an AI bubble (chart G2). Even Sam Altman, CEO of OpenAI, has spoken about this investment bubble, and its bursting could severely damage the U.S. economy. Faced with these risks, investors are already revising their strategies to avoid experiencing a Dotcom 2.0-style collapse.

More information on this problem:

- [EN] The 2025 AI Index Report: Chapter 4: Economy — HAI Stanford University — April 2025

- [EN] The real (economic) AI apocalypse is nigh — C. Doctorow — Pluralistic — September 27, 2025

- [EN] 3 reasons everyone is talking about an AI bubble — A. Tecotzky — Business Insider — August 26, 2025

Work-Related Issues

Problem 4

How can we boost productivity through AI without reducing workers’ well-being?

Productivity is probably the impact investors anticipate most: “artificial intelligence […] has the potential to be as transformative as the steam engine was during the 19th-century industrial revolution,” according to a McKinsey article citing a McKinsey study. In theory, AI is a technology that could revolutionize the way we work and become a powerful tool to help us tackle various challenges. Its capabilities make it an ideal assistant for drafting meeting notes or retrieving scattered information from a set of documents. In practice, however, short-term improvements have yielded mixed results.

In terms of productivity, there are as many positive cases as negative ones. In the short term, it is therefore difficult to assess AI’s impact on employees’ daily work, and broader studies are needed to settle the question. Nevertheless, one article has already shed light on the phenomenon of “AI workslop”: documents that appear well-crafted but whose content is actually sloppy and superficial. This practice purely and simply reduces team productivity.

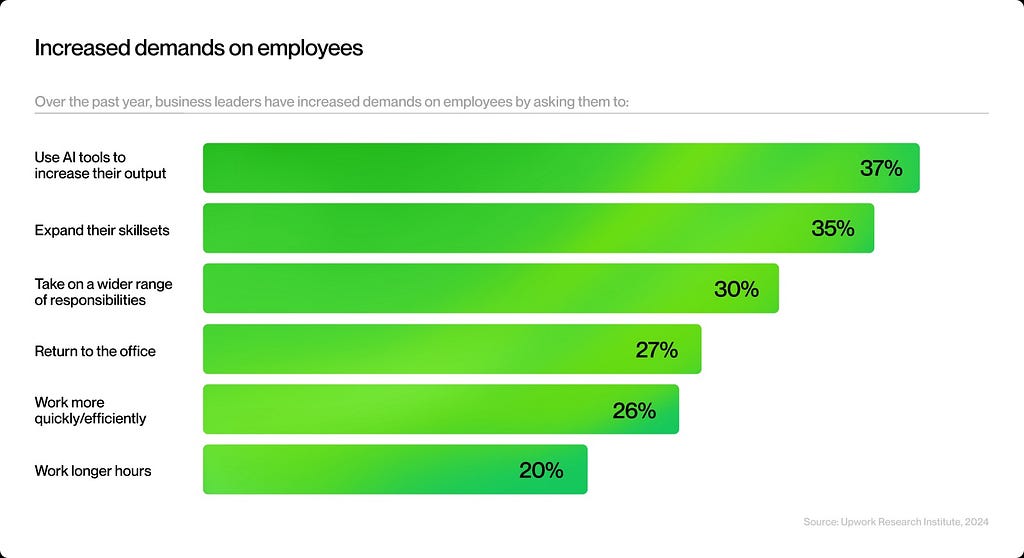

When it comes to employee well-being, we see both unchanged working conditions in German companies and deteriorated conditions in American-based ones (according to chart G3). One possible explanation for this difference could be the much stronger job security in German companies compared to English-speaking ones, reducing the pressure for significantly increased productivity to keep one’s job. Consequently, integrating AI into workplaces would require strong labor legislation and a healthy work environment to prevent a rise in burnout cases.

More information on this problem:

- [EN] Artificial intelligence and the wellbeing of workers — O. Giuntella, J. Konig, L. Stella — Nature — June 23, 2025

- [EN] Does AI actually boost productivity? The evidence is murky — J. Whittle — The Conversation — July 10, 2025

Problem 5

How can we ensure that workers in jobs eliminated by AI are able to make a living?

The fear of job loss caused by the rise of AI is anything but an illusion. Amazon, UPS, HP, Cisco, Duolingo, and Klarna have all carried out massive layoffs explicitly attributed to AI. These cases, in a tech sector already heavily impacted by waves of layoffs in recent years, only add to the constant anxiety shared by tech workers.

However, it’s worth noting that these examples involve mainly American companies, where, as explained in the previous problem, job security is weaker. Looking at the French context, the most well-known case of layoffs in favor of AI is Onclusive. The company reportedly dismissed 217 employees citing AI as the reason, though this claim is contested. Moreover, a ruling by the Nanterre Judicial Court on February 14, 2025, seems to set an initial direction for implementing AI in companies while safeguarding jobs. Still, we must wait for a first ruling from the Court of Cassation to definitively confirm this trend.

Outside the tech sector, this constant anxiety is also present. Workers in fields such as graphic design, translation, audio, photography — i.e., creative professions — are now widely affected by declining incomes. And the reason is simple: why pay someone €100 for a handmade image when a ChatGPT subscription costs three times less and allows unlimited image generation? Apply this reasoning to music, and you get a crisis in a market already weakened by the rise of music streaming platforms.

The only way to counter the harmful effects of this progressive destruction of jobs is “simple”: we need to rethink how our society functions. Without jobs, people cannot earn money. And without money, people cannot live. Our current societal model is therefore incompatible with the consequences of AI on creative fields, and these consequences will continue to worsen relentlessly.

More information on this problem:

- [EN] AI Slop is destroying the Internet — Kurzgesagt — In a Nutshell — YouTube — October 2025

- [EN] The Simple Macroeconomics of AI — D. Acemoglu — MIT — April 5, 2024

- [FR] Vers une nouvelle conception du travail ? — F. Kauder — aamulumi.info — October 2, 2019

Problem 6

How can we continue to train experienced professionals when entry-level positions are disappearing?

When considering jobs directly impacted by AI, there is a clear decline in hiring for entry-level positions. This decline is not observed among more experienced workers. While this trend may seem temporary in the short term, it’s important to remember that without beginners, there can’t be experts.

It’s easy to illustrate the consequences of a lack of training with something we know well in France: the shortage of doctors. The numerus clausus policy limited the number of people trained in medicine between 1970 and 2020. As the population grew and medical density declined, this lack of training for newcomers created a highly strained healthcare situation. A similar scenario already exists in IT, with a shortage of COBOL specialists creating an alarming climate for the banking sector. And if we stop training new professionals, the “COBOL-ization” of jobs could spread even further.

More information on this problem:

- [EN] The Perils of Using AI to Replace Entry-Level Jobs — A. C. Edmondson, T. Chamorro-Premuzic — Harvard Business Review — September 16, 2025

- [EN] Canaries in the Coal Mine? Six Facts about the Recent Employment Effect of Artificial Intelligence — E. Brynjolfsson, B. Chandar, R. Chen — HAI Stanford University — November 2025

- [EN] New evidence strongly suggests AI is killing jobs for young programmers — T. B. Lee — Understanding AI — August 28, 2025

Legal Issues

Problem 7

How can we guarantee the protection of intellectual property against the illegal exploitation of data for AI training?

Training AI models involves leveraging various types of media to teach these systems, and very often, that media is protected by intellectual property rights. As a result, numerous lawsuits are currently underway, and most recently, OpenAI managed to antagonize a large part of the Japanese industry with Sora 2 (as well as the Japanese government).

To avoid these legal issues, companies developing AI models are trying a new approach: using their users’ data. The list now includes LinkedIn (Microsoft), Instagram and Facebook (Meta), and X, but also Slack, Figma, and even Tumblr.

Problem 8

How can we adapt the principle of intellectual property in the face of AI-generated works?

Under French law (following the EU AI Act), the rule is simple: a creation entirely produced by AI cannot be subject to copyright because AI is not human. However, if AI is used merely as a tool, copyright then belongs to the author. Beyond this apparent simplicity, one question throws everything into doubt: how do we distinguish between an AI-generated work and an AI-assisted work?

A striking illustration of this issue is the famous case of Jason M. Allen, who won an art competition in 2022 with a piece generated by AI (image I2 above). Following this event, he attempted to register his work with the U.S. Copyright Office, which refused because it was AI-generated. This raises a moral debate on several points:

- If AI-generated works are rejected, what happens when works are not disclosed as AI-generated? Conversely, will human-made works be rejected for being too similar to AI creations?

- If copyright applies only to works created by humans, what about works produced by algorithms and recognized as those of Vera Molnár or Manfred Mohr?

- At what point should we consider a work as AI-assisted rather than AI-created? Does a human applying a simple color filter make the work human?

Such questions are nearly impossible to resolve without suitable detection tools. And while these tools do exist, they are already discouraged in academic contexts due to a high error rate.

More information on this problem:

- [FR] IA générative et créations de mode : quels enjeux juridiques ? — G. Makoundou — La Grande Bibliothèque du Droit — July 2024

- [EN] The AI Act Explorer — Future of Life Institute

- [EN] Can Works Created with AI Be Copyrighted? Copyright Office Issues Formal Guidance — E. Gourvitz, S. L. Ameri — Ropes & Gray — March 2023

Social Issues

Problem 9

How can we prevent artificial intelligence from becoming a catalyst for spreading misinformation?

Social networks have a direct impact on our world. Spreading false information therefore has real consequences. For example, marketing campaigns on Facebook had a direct influence on the 2016 U.S. presidential election, and conspiracy theories are dangerously proliferating through social media echo chambers.

Alongside these developments, tools for detecting fake news continue to evolve, but the damage is already done, and some sociologists and political scientists argue that we have entered the era of post-truth — where lies are no longer shocking and truth is no longer essential.

In this complex context, it is crucial to understand that AI will have a significant impact on several aspects of truth. Its use drastically reduces the time needed to create information (writing, layout, editing, publishing), whether that information is textual, graphic, or even audiovisual. As a result, platforms like YouTube are facing a growing flood of content that is partially or entirely AI-generated. Moreover, due to our inability to distinguish between generated and non-generated information (despite improving methods to address this issue), misinformation has taken on a new dimension since 2023 with the rise of AI-generated images.

More information on this problem:

- [EN] AMMEBA: A Large-Scale Survey and Dataset of Media-Based Misinformation In-The-Wild — N. Dufour, A. Pathak, P. Samangouei, N. Hariri, S. Deshetti, A. Dudfield, C. Guess, P. H. Escayola, B. Tran, M. Babakar, C. Bregler — arXiv — May 2024

- [EN] A Guide to Misinformation Detection Data and Evaluation — C. Thibault, J.-J. Tian, G. Peloquin-Skulski, T. L. Curtis, J. Zhou, F. Laflamme, Y. Guan, R. Rabbany, J.-F. Godbout, K. Pelrine — arXiv — August 2025

Problem 10

How can we prevent artificial intelligence from being used for psychological and moral harm?

Incidents of cyberviolence are already numerous. In a 2021 survey (image I3), 41% of French respondents reported having experienced cyberviolence. This figure rises dramatically and systematically for people from minority groups and for young people. While the sample is insufficient to determine conviction rates, we can estimate an approximate figure by assuming that gender-based and sexual violence is treated as more serious than cyberviolence, so that cyberviolence is unlikely to be prosecuted more successfully. Following this logic, and considering that fewer than 10% of gender-based and sexual violence victims file complaints and fewer than 10% of those complaints lead to convictions, we arrive at a very low rate: less than 1% of victims see a conviction.
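For readers who want to check the arithmetic behind this estimate, here is a minimal sketch. It assumes the two rates can simply be multiplied, which is only a rough approximation, and the function name `conviction_rate_upper_bound` is purely illustrative. The two 10% figures are the upper bounds quoted above for gender-based and sexual violence, not measured values for cyberviolence.

```python
def conviction_rate_upper_bound(complaint_rate: float, conviction_rate: float) -> float:
    """Share of victims whose case ends in a conviction, at most.

    complaint_rate:  upper bound on the share of victims who file a complaint.
    conviction_rate: upper bound on the share of complaints leading to a conviction.
    Assumption: the two rates are chained by simple multiplication.
    """
    for rate in (complaint_rate, conviction_rate):
        if not 0.0 <= rate <= 1.0:
            raise ValueError("rates must be between 0 and 1")
    return complaint_rate * conviction_rate


# Upper bounds quoted in the text: fewer than 10% and fewer than 10%.
bound = conviction_rate_upper_bound(0.10, 0.10)
print(f"At most {bound:.0%} of victims see a conviction")
```

Because both inputs are upper bounds, the result is an upper bound as well: the real share can only be lower.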

In this context, artificial intelligence has dangerously simplified access to harassment tools. First, AI can be used as a generator of harassment media. Criminals can easily create content that is as effective as, or even more effective than, traditional media. This trend is evident in France through a flood of racist gorilla-themed videos aimed at harassing people from other ethnic backgrounds.

Second, AI is an extremely effective tool for creating deepfakes. As a result, the number of cases involving the generation of explicit content without consent has exploded. Illegal “nudification” tools have multiplied, with varying business models but often targeting the same clientele: men. These tools are used on influencers as well as on ordinary women. Worse still, they are also used on children and teenage girls, both by other children and by pedophile criminals. And, as noted in the legal issues, the lack of detection capabilities prevents any reduction in the number of victims.

Finally, AI models are trained using datasets created by labeling thousands of images (a process that, incidentally, exploits millions of people). Consequently, AI will define things the same way we do, which means reproducing the same societal stereotypes. This includes racist biases about Australiana and African American English, as well as misogynistic biases. It is worth noting, however, that LLMs seem to have recently improved on issues such as transphobia (with the exception of Grok).

More information on this problem:

- [EN] Analyzing the AI Nudification Application Ecosystem — C. Gibson, D. Olszewski, N. G. Brigham, A. Crowder, K. R. B. Butler, P. Traynor, E. M. Redmiles, T. Kohno — arXiv — November 2024

- [EN] AI can be cyberbullying perpetrators: Investigating individuals’ perceptions and attitudes towards AI-generated cyberbullying — W. Pei, F. Wang, Y. T. Chua — ScienceDirect — September 2025

- [EN] ‘Australiana’ images made by AI are racist and full of tired cliches, new study shows — T. Leaver, S. Srdarov — The Conversation — August 14, 2025

Science Issue

Problem 11

How can we prevent artificial intelligence from contributing to the global pollution of the scientific literature?

For several years now, the world of scientific publishing has been plagued by the massive release of fake scientific articles. The number of retractions has risen from around 1,000 in 2013 to over 10,000 in 2023. This surge is driven by growing corruption in the field, involving paper mills and profit-hungry journals, which enables certain researchers to secure promotions or bonuses or, worse, to manipulate public opinion with misleading scientific arguments. For example, nearly 10% of scientific articles on cancer published since 1999 are believed to have been written by these paper mills. There is also this striking list of more than 500 retracted articles on COVID-19.

Faced with these alarming numbers, researchers have begun organizing to fight these fake publications. But the innovation brought by AI in generating diverse content has accelerated the trend of publishing fraudulent or redundant articles, especially in the field of medicine.

After all this reading, the arrival of AI may seem like nothing more than a descent into the hell of uncertainty. Yet this uncertainty can be interpreted in different ways: it is the absence of a path to follow, but also the existence of a direction to create. It is this direction that we must define together, as a human society, not as a liberal enterprise. This list of problems represents a set of possibilities that we can discuss, reflect on, and debate, in order to build a future where AI fulfills Keynes’s ideal.

It is therefore our duty to reason through these issues. We must understand what has not been understood and think about what has not yet been defined. By doing so, we can design a healthy framework around AI, one that is sorely lacking today and whose absence is currently filled by corporate and entrepreneurial decisions. It is up to us to choose how AI should impact us, and the time to begin this work is now.

Thanks to Nicolas, Julien, Olivier, Théo, Marine, Maxime and Élodie for their help with proofreading and their advice, and thanks to ekino for giving me the time to write this article that matters so much to me. 🫶🏻

Feel free to follow the ekino Medium account and add a like if you want to see me write more articles like this one. And take care of yourself!

AI vs. its problems was originally published in ekino-france on Medium, where people are continuing the conversation by highlighting and responding to this story.