Introduction to NLP (Part II)

Cet article est aussi disponible en français.

Introduction

This is the follow up of the first post where we discussed the basis of NLP (Natural Language Processing). In this second post, we are going to focus on discussing existing NLP frameworks and what type of data/use cases are processed using NLP.

Frameworks

Python

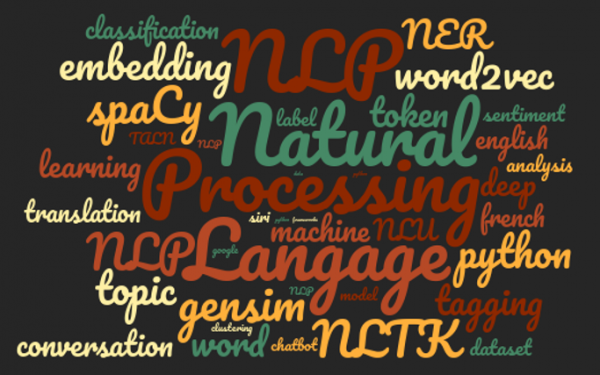

Python is a programming language commonly used to for NLP. There are few libraries available to work with natural language. The most famous and widely used python libraries are Gensim, NLTK and more recently SpaCy.

For a while, NLTK (Natural Language ToolKit) was the standard Python library to do NLP. It includes classification, POS-Tagging (fr), stemming (fr) and tokenization (words and sentences) algorithms. It includes multiple data corpora and allows to do NER (Named-Entity Recognition) and sentiment analysis. Even with regular updating, this library has become outdated (2001) and has started showing limitations in terms of performance.

A more recent library (2015) named SpaCy has emerged since. This library written in Python and Cython provides the same type of tools as in NLTK : tokenization, POS-Tagging, NER (Named-Entity Recognition), sentiment analysis (work in progress), lemmatisation (fr). It also presents pre-trained word vectors and statistical models in multiple language (english, german, french and spanish for now).

The third library called Gensim is slightly different from the two discussed above in that it is specialized in Topic Modeling. It implements multiple statistical models of Topic Modeling (Latent Dirichlet Allocation (fr) or LDA, Latent Semantic Indexing (fr) or LSI, Hierarchical Dirichlet Process or HDP) and also provides possibility to carry out Word Embedding (fr).

Apart from the three libraries discussed above, there exists other libraries which can be useful to do NLP. Examples include, the visualization library called PyLDAvis which allows to easily visualize Topic Modeling results (see figure 3. in the first post for an example). An another important library is the famous Machine Learning library, Scikit-Learn. With Scikit-Learn, we can not only do tokenization but also features extraction. Moreover, it provides several different classification algorithms. Lastly, we have Keras. It is an API written in Python designed to build and train neural networks (fr) models. These models can be used to do texts classification. Keras also provides Word Embedding tools.

Others languages

For those of you who are interested in using Java for NLP, there exists packages and support for these languages. Few open source tools written in Java are :

- Stanford CoreNLP implements POS-Tagging, NER, sentiment analysis, tokenization for english, arabic, chinese, french, german and spanish.

- Apache OpenNLP perform similar tasks, tokenization, sentence segmentation POS-Tagging, NER, languages detection. It supports multiple languages (danish, english, german, spanish, dutch, portuguese) but not for every task.

We can also mention the programming language R, very often used in Data Science. Multiple R packages are dedicated to NLP :

- cleanNLP presents a set of functions to normalize (preprocess) texts,

- coreNLP provides a generic interface to use the rich tools of the Java library Stanford CoreNLP,

- openNLP provides an interface to use the tools of the Java library OpenNLP,

- NLP presents basics functions for NLP,

- topicmodels allows to do Topic Modeling with the LDA method,

- text2vec provide functions to do tokenization, vectorization, Topic Modeling (LDA, LSI) and Word Embedding (GloVe algorithm).

To finish, we can mention few closed source tools developed by Web giants : IBM Watson, Microsoft Azure (fr), Google Cloud Platform (fr), AWS (fr). All this platforms possess tools and API to do NLP.

Data in NLP

Uniqueness of NLP

As we have seen in the previous post, NLP tasks often use Machine Learning (fr). One of the key points in the efficiency of a Machine Learning model is the quality of the data. Since textual data are very complex to use. The preprocessing of the data is a long and tricky problem in NLP.

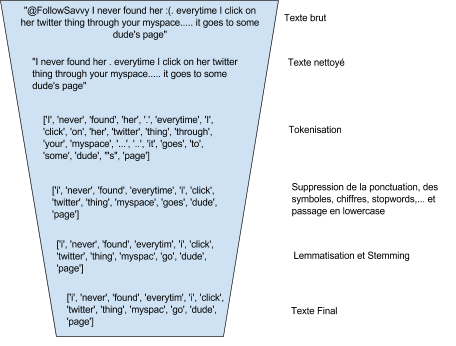

It often starts with a cleaning process which can be different depending on the data source. For example, data from social network or forums need removal of smileys, emojis, urls and other special features (hashtags, mentions…). The contractions of words and onomatopoeia can also be a problem.

After the cleaning step, data needs to be normalized : tokenization, lemmatization, stemming, removal of numbers, punctuation, symbols and stopwords (fr), conversion in lowercase. Not all of these transformations are always needed. It depends on the final application and it is important to test different approaches to identify the most efficient transformations.

Lastly, Machine Learning methods need quantitative data. So the textual data needs to be transformed to numbers. There are several approaches to do this. The Term-Frequency (fr) (TF) method counts, for each text, the number of occurrences of each token of the corpus. Each text is characterised by an occurrence vector. This representation is called Bag-Of-Word (or sac de mots in french).

# Input<br />

sentence_1 = "John likes to watch movies and Mary likes movies too"<br />

sentence_2 = "John also likes to watch football games"

# Vocabulary<br />

['to', 'Mary', 'John', 'movies', 'likes', 'watch', 'and', 'too', 'games', 'also', 'football']

# TF vectors<br />

sentence_1 : [1, 1, 1, 2, 2, 1, 1, 1, 0, 0, 0]<br />

sentence_2 : [1, 0, 1, 0, 1, 1, 0, 0, 1, 1, 1]

The TF-IDF (fr) (Term Frequency-Inverse Document Frequency) method is also based on the TF approach mentioned above, but not only this results in a vectorial representation of the texts as in TF, but instead of creating occurrences vectors (vectors of number of occurrences), it creates weight vectors. In other words, it allows less frequent words, which are the most discriminant, to have more weight.

However, these methods have two major disadvantages : the vector size increases with the vocabulary and a lack of significance for the vectors. Indeed, the position of the words in the vocabulary being arbitrary, it does not describe a potential relationship between them.

A recent method called Word Embedding (fr) aims to erase these problems. With this technique, the vector dimension is a chosen parameter. In addition, the vectors are built in a manner that takes into account the context of the words. This way, the words which appear in similar context will have closer vectors (in the meaning of vectorial distance) than words which appear in different context. It allows to capture the potential semantic, syntactic or thematic similarity of the words.

# Calculation of the similarity between the word vectors man and woman<br />

man = nlp_en('man')<br />

woman = nlp_en('woman')<br />

man.similarity(woman)

# Output<br />

0.74017445384912972

# Calculation of the similarity between the word vectors tree and dog<br />

tree = nlp_en('tree')<br />

dog = nlp_en('dog')<br />

tree.similarity(dog)

# Output<br />

0.32623763568487174

This method transform each words of a text in a numerical vector. To obtain a numerical representation of a text with this method, there are multiple strategies. We can represent a text by an array of vectors, one vector for each word of the text. We also can represent a text by a single vector corresponding to a combination (the mean by example) of the word vectors in the text.

There is another complication when you work with natural language, it is the diversity of languages. We may think that methods and features are independent of the languages but they have unique specificities that need be taken into account. By example, a n-grams based model is less robust for languages with a very free word order. For some asian languages like mandarin, japanese and thai, words segmentation is not as clear as for other languages. So the segmentation work will be of greater importance.

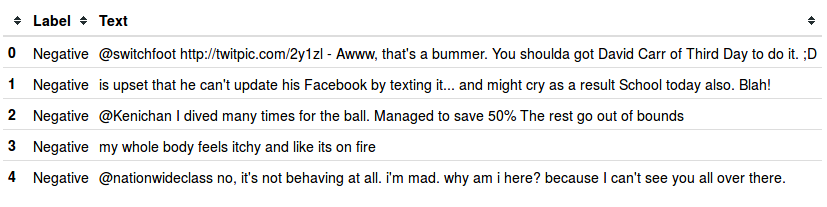

Data Source

The type of NLP application/use-case puts a limit on the characteristics of the data. For example, a classification application needs labeled dataset whereas Topic Modeling or clustering methods can work with unlabelled datasets.

Several frameworks provide tools to build Word Embedding models from its own corpus of texts, but it is also possible to use sets of pre-trained word vectors.

Unsurprisingly, english is the language with the largest amount of resources. It is relatively easy to find datasets (labeled or not) and pre-trained Word Embedding vectors in English on web. The data sources for other languages are certainly less provided but under development. For example, SpaCy has several pre-trained word vectors sets in english but also one in french, one in german and one in spanish. We can also mentioned Facebook which has developed the Word Embedding algorithm FastText. We can thus find vectors pre-trained with FastText from Wikipedia in 294 languages. Other pre-trained word vectors sources are referenced in the bibliography.

It is also possible to create its own dataset by doing Web Crawling (Wikipedia is a very rich multilingual texts source) or using retrieval news or tweets APIs and then labelled the data if necessary.

Conclusion

This introduction which was split between two posts presented a detailed overview on what is NLP and the tools available for developing NLP applications. This will be followed by a series of other posts where we will discuss the topic in more detail providing concrete examples of python implementation and discussing codes. We will be basing our posts on more focussed and concrete subjects such as Topic Modeling, Word Embedding and texts classification.

Bibliography

- Sklearn Documentation about features extraction with textual data : http://scikit-learn.org/stable/modules/feature_extraction.html#text-feature-extraction

- Article about NLP Python libraries : https://elitedatascience.com/python-nlp-libraries

- Pre-trained word vectors of more than 30 languages : https://github.com/Kyubyong/wordvectors

- Pre-trained word vectors of french language : http://fauconnier.github.io/

Images credit

All the images are under licence CC0.

Lire plus d’articles

-

6 Minutes read

Introduction au NLP (Partie II)

Lire la suite -

6 Minutes read

Introduction au NLP (Partie I)

Lire la suite